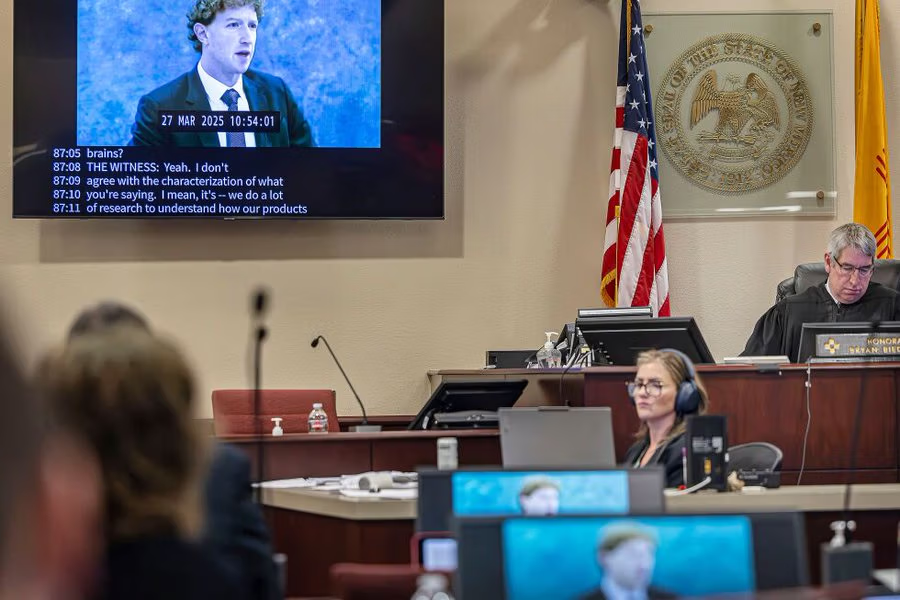

A New Mexico court has ordered Meta Platforms to pay $375 million in civil penalties after a jury found the company misled users about the safety of its platforms for children, in a landmark case that could reshape how social media companies are held accountable.

The verdict follows a seven-week trial in which jurors concluded that Meta, which owns Facebook, Instagram, and WhatsApp, violated the state’s Unfair Practices Act by failing to adequately protect young users from harmful content and predatory behavior.

New Mexico Attorney General Raul Torrez described the ruling as “historic,” saying it marks the first successful state-level action against Meta over child safety concerns.

“Meta executives knew their products harmed children, disregarded warnings from their own employees, and lied to the public,” Torrez said.

The case centered on allegations that Meta’s platforms exposed minors to sexually explicit material and allowed sexual predators to contact them, with prosecutors arguing that the company’s recommendation algorithms amplified those risks.

During the trial, jurors reviewed internal company documents and heard testimony from former employees, including whistleblower Arturo Béjar, who said experiments conducted on Instagram showed underage users were regularly served sexualized content. He also testified that his own daughter had been approached by a stranger on the platform.

Prosecutors cited internal research indicating that a significant share of users had encountered unwanted nudity or sexual content within a short period, underscoring what they described as systemic issues in content moderation.

The $375 million penalty reflects thousands of violations identified by the jury, each subject to a fine capped under state law.

Meta said it disagrees with the verdict and intends to appeal. A company spokesperson said the firm continues to invest in safety tools and highlighted features such as “Teen Accounts” and parental alerts designed to limit exposure to harmful content.

The ruling comes as Meta faces mounting legal pressure in the United States, with thousands of similar lawsuits alleging that its platforms are designed to harm young users. A separate case in Los Angeles is examining claims that social media platforms contributed to addiction among minors.

The outcome of the New Mexico case is likely to intensify scrutiny of social media companies and could influence future regulation, particularly around how platforms design algorithms and safeguard younger users.